Yahel Oren

Data Scientist

llms.txt in 2026: The Full Guide

Bots Aren't Fetching It Yet. Here's Why It's Still a B2A Must-Have.

We analyzed over 500M LLM bot traffic events across the brands we monitor at Limy. One finding stands out: AI search crawlers are almost never fetching /llms.txt. GPTBot, ClaudeBot, PerplexityBot, OAI-SearchBot, and Google-Extended overwhelmingly skip the file and crawl HTML directly. That's the empirical reality in May 2026, and it doesn't match the noise in the GEO space.

It also doesn't change our recommendation. We tell every brand we work with to ship one.

The reason is simple: llms.txt is not the SEO play it's being sold as. It's a Business-to-Agent (B2A) play - the first standardized way for a brand to publish a machine-readable surface that AI agents can route on. The companies treating it like the next meta-keywords tag are measuring the wrong outcome. The companies treating it like infrastructure for the agentic web are measuring the right one.

This is the honest Limy guide. What llms.txt does and doesn't do, where it's already working, the use cases that justify shipping it across industries, how to implement it without wasting effort, and a citation-friendly FAQ at the end.

What llms.txt actually is

llms.txt is a proposed standard, introduced by Jeremy Howard of Answer.AI in September 2024, for a Markdown file at the root of your domain - https://example.com/llms.txt - that gives AI systems a curated index of your most important content with one-line descriptions.

There's also llms-full.txt, a longer companion that embeds the actual content of those linked pages so an agent can ingest everything in a single fetch.

The technical pitch is clean: HTML is noisy for LLMs. Navigation, JavaScript, ads, and tracking pixels burn through the context window before the model reaches anything useful. Companies serving Markdown instead of HTML have reported up to 10x token reductions, which translates directly into faster, cheaper, more accurate agent behavior.
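The token argument can be made concrete with a minimal standard-library Python sketch. It drops script, style, and navigation chrome from a page and compares the raw HTML size with the text that remains. Character counts stand in for tokens here, and the list of skipped tags is our assumption. This is not how any vendor measured its 10x figure, and it does not convert the page to Markdown.

```python
from html.parser import HTMLParser

# Tags whose contents we treat as page chrome rather than content (an assumption).
SKIP_TAGS = {"script", "style", "nav", "header", "footer"}

class VisibleText(HTMLParser):
    def __init__(self):
        super().__init__()
        self.skip_depth = 0  # > 0 while inside a skipped element
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        # Keep non-blank text that is not inside a skipped element.
        if not self.skip_depth and data.strip():
            self.chunks.append(data.strip())

def visible_text(html: str) -> str:
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.chunks)

def size_ratio(html: str) -> float:
    """Raw HTML characters per character of visible text (a rough token proxy)."""
    return len(html) / max(len(visible_text(html)), 1)

sample = """<html><head><style>body{color:red}</style>
<script>window.dataLayer=[];track('pageview');</script></head>
<body><nav><a href="/">Home</a> <a href="/blog">Blog</a></nav>
<main><h1>Pricing</h1><p>Starter is $500/mo.</p></main></body></html>"""

print(visible_text(sample))
print(round(size_ratio(sample), 1))
```

On real pages the ratio depends heavily on the site's template. Measuring with the target model's tokenizer would give a truer picture than character counts.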

That's the what. The interesting question is who's reading it.

The bad: what every honest study has found

If you're shipping llms.txt to improve AI search visibility - to get cited more in ChatGPT, Perplexity, Claude, Gemini, or Google AI Mode - the evidence is brutal.

Adoption is low and not growing fast. An SE Ranking study of 300,000 domains found a 10.13% adoption rate. After eighteen months of industry conversation, llms.txt is on roughly one in ten sites.

Crawler interest from the search and answer bots is negligible. We monitored over 500M AI bot visits across a 90-day window, and only 408 targeted llms.txt directly. That is a negligible share of all AI crawler traffic.
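A quick check shows the scale. Take the rounded 500M figure as the denominator. The true total N is larger, so the real share is smaller still:

$$
\frac{408}{N} < \frac{408}{5 \times 10^{8}} \approx 8.2 \times 10^{-7} \approx 0.00008\%
$$

That works out to fewer than one request in 1.2 million.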

Google has said no, on the record. In July 2025, Google's Gary Illyes confirmed Google doesn't support llms.txt and isn't planning to, and John Mueller compared it to the discredited keywords meta tag. No major LLM provider - OpenAI, Anthropic, Google, Meta, Mistral - has publicly committed to using llms.txt as a signal in their production search or answer surfaces.

This matches what we see at Limy. Across the 515,382,577 LLM bot traffic events we analyzed, filtering for the user agents that actually drive citations - GPTBot, ClaudeBot, PerplexityBot, OAI-SearchBot, Google-Extended - the share of requests touching /llms.txt is statistically negligible. The file is almost untouched by the bots that matter for AI search visibility.

That's the honest answer to "will llms.txt improve my AI search rankings?" Today, in 2026: no. Anyone selling you llms.txt as a GEO ranking factor is selling you something the data doesn't support.

So why ship one?

The good: llms.txt is a B2A protocol, not a GEO protocol

Here's the reframe that changes the math. The same file that does almost nothing for ChatGPT search citations is doing real work in a different layer of the AI stack: the agentic web - the layer where AI agents act on behalf of users, fetch context, choose tools, and complete tasks.

This is what Limy calls Business-to-Agent (B2A). Just as B2B brands once needed websites that humans could navigate, and B2C brands needed mobile apps that humans could tap, every brand in 2026 needs surfaces that agents can route on. llms.txt is the first widely-adopted B2A standard.

IDE agents fetch llms.txt routinely. Cursor, Windsurf, Claude Code, GitHub Copilot, Cline, Aider - they all look for /llms.txt and /llms-full.txt when pointed at a documentation site. The standard playbook is: agent identifies which dependency owns a feature, fetches that library's llms.txt, then pulls only the relevant linked pages before writing code.

MCP servers are built around it. LangChain shipped mcpdoc, an open-source MCP server that exposes llms.txt files to host applications like Cursor, Windsurf, and Claude Desktop, giving the user a fetch_docs tool to read URLs listed in any provided llms.txt. The file is the routing layer for the entire interaction.
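The routing pattern described above can be sketched in Python. The code parses the link lines out of an llms.txt and picks the few links whose title or description overlaps with the task, so the agent fetches only those URLs. This is a hypothetical illustration, not mcpdoc's code or any IDE agent's actual logic. The keyword-overlap scoring is a deliberately naive assumption; real agents let the model choose. The ExampleLib file and example.com URLs are placeholders.

```python
import re

# Conventional link line: "- [Title](URL): Description" (description optional).
LINK_RE = re.compile(r"^-\s*\[(?P<title>[^\]]+)\]\((?P<url>[^)\s]+)\)(?::\s*(?P<desc>.*))?$")

def parse_links(llms_txt: str) -> list[dict]:
    links = []
    for line in llms_txt.splitlines():
        m = LINK_RE.match(line.strip())
        if m:
            links.append({"title": m["title"], "url": m["url"], "desc": m["desc"] or ""})
    return links

def route(llms_txt: str, task: str, k: int = 2) -> list[str]:
    """Return up to k URLs whose title/description share the most words with the task."""
    task_words = set(re.findall(r"[a-z0-9]+", task.lower()))
    scored = []
    for link in parse_links(llms_txt):
        words = set(re.findall(r"[a-z0-9]+", (link["title"] + " " + link["desc"]).lower()))
        overlap = len(task_words & words)
        if overlap:
            scored.append((overlap, link["url"]))
    scored.sort(key=lambda pair: pair[0], reverse=True)  # stable: ties keep file order
    return [url for _, url in scored[:k]]

doc = """# ExampleLib
> A hypothetical HTTP client library.
## Docs
- [Authentication](https://example.com/docs/auth.md): How to pass API keys and OAuth tokens.
- [Retries](https://example.com/docs/retries.md): Configuring retry and backoff behavior.
- [Changelog](https://example.com/changelog.md): Release notes.
"""

print(route(doc, "add oauth authentication to my client"))
```

The point of the sketch is the shape of the interaction: one small fetch to read the index, then targeted fetches of only the relevant pages.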

Documentation platforms ship it by default. When Mintlify rolled out llms.txt support across all docs sites it hosts in late 2024, thousands of sites - including Anthropic and Cursor - gained the file overnight. Fern, GitBook, Vercel Docs, Supabase Docs, Yoast, and Rank Math now ship it as well.

| | AI search / answer engines | Agentic web (IDE agents, MCP, tool-use) - B2A |
|---|---|---|
| Does llms.txt help? | Not measurably | Yes, materially |
| Who fetches it? | Almost nobody (today) | Cursor, Claude Code, Copilot, Windsurf, MCP servers |
| Why? | Search bots already crawl HTML fine | Agents have narrow context windows and need clean Markdown |
| Time horizon for value | Speculative - depends on provider adoption | Today |

Where B2A surfaces matter today

llms.txt is most often discussed in the context of developer tools, because that's where the agentic-web stack matured first. But the B2A pattern generalizes well past dev tools, and we're already advising clients across categories on how to think about it.

1. SaaS and developer platforms

The mature case. Stripe, Vercel, Cloudflare, Anthropic, Coinbase, Pinecone, Cursor, and most modern API products ship llms.txt because their users are building with AI coding assistants right now. A well-curated file is the difference between Cursor generating working integration code and Cursor hallucinating an endpoint that doesn't exist. For this category, llms.txt isn't optional - it's a developer-experience requirement.

2. E-commerce and agentic commerce

The fastest-growing use case. As agents start shopping on behalf of users - "buy me running shoes under $150 that ship by Friday" - they need a clean, machine-readable surface for the catalog, pricing rules, shipping policies, and availability. The brands that ship llms.txt pointing agents to canonical product pages (instead of letting them parse cluttered category HTML) will be the brands agents can actually transact with. This is one of the clearest Provider Gap opportunities we see across the Limy book: most enterprise retailers are not yet shipping agent-readable surfaces.

3. Financial services and insurance

Agents comparing investment products, mortgage rates, or insurance policies need factual surfaces - current rates, current terms, current eligibility - not marketing landing pages. llms.txt is how a financial brand directs an agent away from the conversion-optimized hero section and toward the regulator-approved factsheet. For categories where accuracy is regulated, this is also a compliance asset, not just a visibility one.

4. Pharma and healthcare

For markets like Israel - where pharma content is governed by frameworks like Procedures 134 and 137 (נוהל 134 and 137) - llms.txt is a way to direct AI assistants to the compliant patient-information surfaces rather than the promotional ones. The same logic applies in any regulated health vertical: the agent needs to find the approved page, not the SEO-optimized one.

5. Travel and hospitality

Agents booking on behalf of users need access to live policies - cancellation rules, baggage allowances, loyalty terms, room categories. llms.txt is the routing layer that gets the agent to the canonical policy page instead of a landing page designed for human conversion. As travel agents (the AI kind) scale, hotels, airlines, and OTAs without clean B2A surfaces will lose share to those with them.

6. B2B and enterprise software

Sales-research agents - increasingly common inside CRMs and procurement tools - fetch vendor sites to summarize pricing, integrations, and case studies. A well-structured llms.txt that points at the right product pages, the right pricing tier comparisons, and the right ROI proof points materially changes what the agent reports back. This is one of the cleanest Limy use cases: helping a B2B brand be summarized accurately when a buyer's AI does the first round of vendor diligence.

7. News, research, and publishing

For publishers, llms.txt is a way to direct AI engines toward canonical reporting versus thin SEO content. It doesn't fix the citation problem on its own - citation share is driven by the broader Limy 7-pillar framework, not by a single file - but it's a useful supporting surface for publishers who want agents grounding answers in their authoritative content rather than aggregator pages.

The common thread: in every category where an agent might fetch content from your domain on a user's behalf, llms.txt is the cheapest piece of B2A infrastructure you can ship.

How to build llms.txt correctly

Here's the constructive walkthrough - the format spec, an annotated template, and the step-by-step we use when we build these for clients.

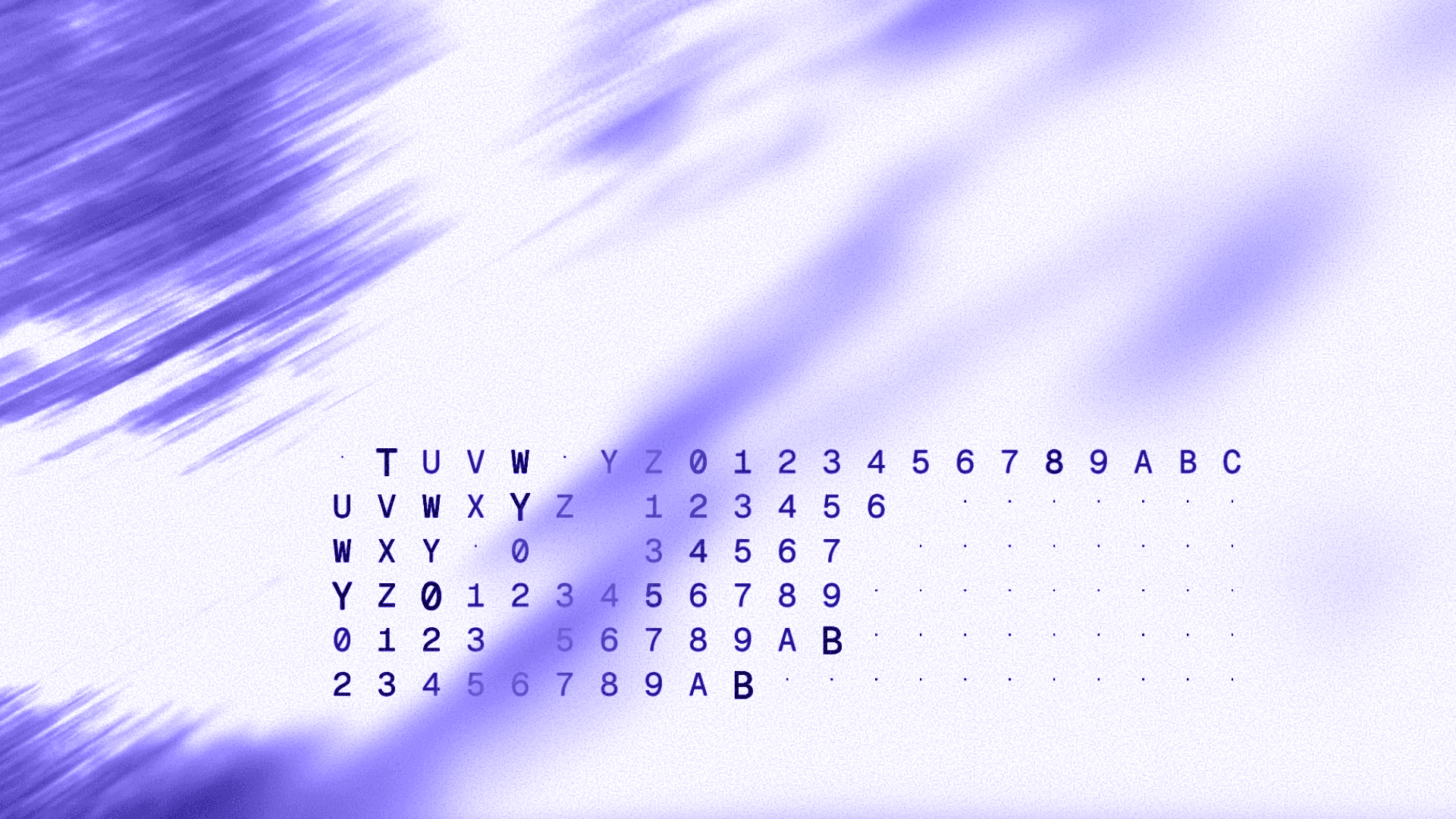

The format

llms.txt is a Markdown file with a specific structure. The Answer.AI spec is loose, but the convention that's emerged across Mintlify, Fern, Stripe, Anthropic, Cloudflare, and the rest of the dev-tool ecosystem looks like this:

# Brand or product name

> One-sentence summary of what this brand/product is and who it's for.

Optional paragraph or two of additional context. Useful if the brand name is ambiguous, or if the agent needs to know what category you're in.

## Section name

- [Page title](https://example.com/page): One-line description of what's on this page and when an agent should fetch it.
- [Another page](https://example.com/another): Another one-line description.

## Another section

- [Page title](https://example.com/page): Description.

## Optional

- [Lower-priority page](https://example.com/extra): Pages an agent can skip if context is tight.
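The structure above is regular enough to parse in a few lines. Below is a minimal Python sketch that assumes the conventions just shown: one `#` title, a `>` summary, and `##` sections of `- [Title](URL): Description` links. It is not the Answer.AI reference parser, and it deliberately ignores free-form paragraphs.

```python
import re
from dataclasses import dataclass, field

# "- [Title](URL): Description", with the description optional.
LINK_RE = re.compile(r"^-\s*\[([^\]]+)\]\(([^)\s]+)\)(?::\s*(.*))?$")

@dataclass
class LlmsTxt:
    title: str = ""
    summary: str = ""
    # section name -> list of (title, url, description)
    sections: dict[str, list[tuple[str, str, str]]] = field(default_factory=dict)

def parse_llms_txt(text: str) -> LlmsTxt:
    doc = LlmsTxt()
    current = None  # name of the H2 section we are currently inside
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("# ") and not doc.title:
            doc.title = line[2:].strip()
        elif line.startswith("> ") and not doc.summary:
            doc.summary = line[2:].strip()
        elif line.startswith("## "):
            current = line[3:].strip()
            doc.sections.setdefault(current, [])
        elif current is not None:
            m = LINK_RE.match(line)
            if m:
                title, url, desc = m.groups()
                doc.sections[current].append((title, url, desc or ""))
    return doc
```

For example, `parse_llms_txt(text).sections.get("Optional", [])` returns the links an agent may drop under context pressure.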

Four structural rules to internalize:

One H1, containing the brand or product name. Not a slogan, not an SEO title - the literal name.

A blockquote summary immediately after the H1. This is the agent's one-second understanding of what you are.

H2 sections group links into logical categories.

Link lines follow the exact format `- [Title](URL): Description.` The title goes in brackets, the URL in parentheses, then a colon and the description. Agents are parsing this; deviations break parsing.

The ## Optional section is a convention with semantic meaning: any agent reading the spec knows it can deprioritize that section under context pressure. Put low-signal pages there.
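The four structural rules can be checked automatically before you deploy. Below is a minimal lint sketch in Python. The strictness choices are our assumptions, not part of the spec. For example, any line starting with "- " that doesn't match the link pattern is reported, and descriptions are treated as optional.

```python
import re

# "- [Title](URL)" optionally followed by ": Description".
LINK_RE = re.compile(r"^- \[[^\]]+\]\([^)\s]+\)(: .+)?$")

def lint_llms_txt(text: str) -> list[str]:
    """Return human-readable problems; an empty list means no issues were found."""
    lines = [line.rstrip() for line in text.splitlines()]
    problems = []
    h1 = [i for i, line in enumerate(lines) if line.startswith("# ")]
    if len(h1) != 1:
        problems.append(f"expected exactly one H1, found {len(h1)}")
    else:
        # The first non-blank line after the H1 should be the blockquote summary.
        after = [line for line in lines[h1[0] + 1:] if line.strip()]
        if not after or not after[0].startswith(">"):
            problems.append("H1 is not followed by a '>' summary")
    if not any(line.startswith("## ") for line in lines):
        problems.append("no H2 sections found")
    for n, line in enumerate(lines, start=1):
        if line.startswith("- ") and not LINK_RE.match(line):
            problems.append(f"line {n}: malformed link line: {line!r}")
    return problems
```

A check like this fits naturally in CI, so a content edit that breaks the format fails before it reaches production.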

An annotated example

Here's a complete llms.txt for a fictional B2B SaaS - close enough to a real category that you can map it to your own brand:

A few things to notice in that example:

The H1 is just "Northwind" - no taglines.

The summary is one or two sentences, in agent voice ("Northwind is..."), not marketing voice ("The world's leading...").

Descriptions tell the agent what is on the page and when to fetch it ("how Northwind generates per-account health scores from product, billing, and CS signals"). That's the routing signal.

Pricing tiers are surfaced with actual numbers. This is the kind of factual detail that gets summarized accurately when an AI agent reports back to a buyer, and that gets hallucinated when it's missing.

The Optional section holds low-signal pages (blog, press kit, about) that an agent can skip if it's tight on tokens.

The build process, step by step

This is the process we run with clients. It takes roughly half a day of focused work.

Step 1 - Identify the canonical pages. Open your sitemap or top-pages report and pick the 20–50 URLs that an agent would actually need to answer questions about your brand. Product pages, pricing, integrations, key customer stories, top-level docs. Skip blog posts unless they're evergreen pillar content. Skip career pages, gated landing pages, paid-traffic destinations. The selection is editorial - be opinionated.

Step 2 - Group into 4–7 sections. Common groupings: Product / Pricing / Integrations / Customers / Documentation / Company. Use whatever groupings match your IA - but resist the temptation to invent twenty sections to make the file look complete. Agents parse fewer, deeper sections better than many shallow ones.

Step 3 - Write the H1 and summary. Brand name as H1. Summary as a blockquote, one or two sentences, third-person, agent-readable. Add an optional paragraph of context if the category isn't obvious from the name (Northwind, Stripe, Anthropic, Limy - all need that paragraph; Tesla, Coca-Cola - probably don't).

Step 4 - Write descriptions. For each link, one sentence. What's on the page, and why an agent would want it. Specifics beat generics: "Starter ($500/mo), Growth ($2,000/mo), Enterprise (custom)" beats "Affordable pricing for every team." If you can include a concrete fact in the description itself, do - agents may use the description as standalone context without ever fetching the URL.
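Steps 3 and 4 can also be captured in a small generator, so the file is rebuilt from one reviewed source of truth instead of being edited by hand. Below is a hypothetical Python sketch. Northwind's category, the page titles, and the example.com URLs are illustrative placeholders. Only the health-score and pricing descriptions echo the ones quoted in this guide.

```python
def render_llms_txt(name: str, summary: str,
                    sections: dict[str, list[tuple[str, str, str]]],
                    context: str = "") -> str:
    """Build llms.txt text from (title, url, description) tuples grouped by section."""
    out = [f"# {name}", "", f"> {summary}", ""]
    if context:
        out += [context, ""]
    for section, links in sections.items():
        out += [f"## {section}", ""]
        out += [f"- [{title}]({url}): {desc}" for title, url, desc in links]
        out.append("")
    return "\n".join(out)

# Hypothetical company, category, and URLs for illustration only.
northwind = render_llms_txt(
    name="Northwind",
    summary="Northwind is a customer-success platform that helps B2B SaaS teams spot at-risk accounts.",
    sections={
        "Product": [("Health scores", "https://example.com/health-scores",
                     "How Northwind generates per-account health scores from product, billing, and CS signals.")],
        "Pricing": [("Pricing", "https://example.com/pricing",
                     "Starter ($500/mo), Growth ($2,000/mo), Enterprise (custom).")],
        "Optional": [("Blog", "https://example.com/blog", "Company blog; skip if context is tight.")],
    },
)
print(northwind)
```

Keeping the page list in data also makes the quarterly review a diff, rather than a re-read of the whole file.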

Step 5 - Decide on llms-full.txt. If your most important content is documentation (you're a SaaS, dev tool, or API product), ship llms-full.txt as well - concatenated Markdown of every linked page, designed for agents that want to ingest everything in one request. If your content is mostly marketing pages, llms.txt alone is enough. Mintlify, Fern, and most docs platforms generate both automatically.
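The "concatenated Markdown" of llms-full.txt can be sketched in Python, assuming you already have each linked page as Markdown. Here the pages are an in-memory dict keyed by URL, and the functions are hypothetical. There is no single mandated layout, so the "## Title / Source: URL" separator is our own choice. Page headings are pushed down two levels so they nest under that separator.

```python
import re

FENCE = "`" * 3  # three backticks, i.e. a Markdown code fence
HEADING_RE = re.compile(r"#{1,4} ")  # H1-H4; demoting by two keeps them within H6

def demote_headings(md: str) -> str:
    """Push headings down two levels (# -> ###), leaving fenced code blocks untouched."""
    out, in_fence = [], False
    for line in md.splitlines():
        if line.lstrip().startswith(FENCE):
            in_fence = not in_fence
        elif not in_fence and HEADING_RE.match(line):
            line = "##" + line
        out.append(line)
    return "\n".join(out)

def build_llms_full(name: str, pages: dict[str, tuple[str, str]]) -> str:
    """pages maps URL -> (title, Markdown body); returns one concatenated document."""
    parts = [f"# {name}", ""]
    for url, (title, body) in pages.items():
        parts += [f"## {title}", "", f"Source: {url}", "", demote_headings(body.strip()), ""]
    return "\n".join(parts)
```

Keeping a "Source:" line per page lets an agent cite the canonical HTML URL even though it read the concatenated copy.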

Step 6 - Deploy to the root. The file must live at https://yourdomain.com/llms.txt - served as text/plain or text/markdown, status 200, no auth wall. Same goes for llms-full.txt if you ship it. For multilingual sites, the convention is one per language root: /en/llms.txt, /he/llms.txt. Some sites also mirror at /.well-known/llms.txt - supported, but the root is canonical.
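A deploy check for Step 6 can be sketched in Python. The pure validation is kept separate from the network fetch so it is easy to unit-test. The function names are ours. The policy is our assumption: any content type other than text/plain or text/markdown counts as a failure, and so does any redirect, including apex-to-www, which you may decide to allow.

```python
import urllib.request

ALLOWED_TYPES = ("text/plain", "text/markdown")

def check_llms_response(status: int, content_type: str, requested: str, final_url: str) -> list[str]:
    """Return problems with an llms.txt response; an empty list means it looks deployable."""
    problems = []
    if status != 200:
        problems.append(f"expected status 200, got {status}")
    media_type = content_type.split(";")[0].strip().lower()  # drop "; charset=..."
    if media_type not in ALLOWED_TYPES:
        problems.append(f"unexpected Content-Type {content_type!r}")
    if final_url != requested:
        problems.append(f"redirected to {final_url} (possible login wall)")
    return problems

def check_live(domain: str) -> list[str]:
    """Fetch https://<domain>/llms.txt; urlopen follows redirects and raises HTTPError on 4xx/5xx."""
    url = f"https://{domain}/llms.txt"
    req = urllib.request.Request(url, headers={"User-Agent": "llms-txt-check/0.1"})
    with urllib.request.urlopen(req, timeout=10) as resp:
        return check_llms_response(resp.status, resp.headers.get("Content-Type", ""), url, resp.geturl())
```

Run the same check against llms-full.txt and any per-language copies (for example /en/llms.txt) if you ship them.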

Step 7 - Audit robots.txt alongside it. Confirm that the AI user agents you want fetching the file aren't blocked: GPTBot, ClaudeBot, PerplexityBot, OAI-SearchBot, Google-Extended, Applebot-Extended, and, if you're comfortable with Meta and ByteDance training on your content, Meta-ExternalAgent and Bytespider. We've fixed this misconfiguration on more enterprise sites than is comfortable.
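Python's standard library ships a robots.txt parser that works as a quick first pass for Step 7. The sketch below reports which of the user agents listed in this guide a given robots.txt would block from fetching /llms.txt. One caveat: urllib.robotparser applies the first matching rule, while Google documents longest-match precedence. Treat disagreements as a prompt to check manually.

```python
from urllib.robotparser import RobotFileParser

# User agents named in this guide; extend or trim to match your own policy.
AI_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot", "OAI-SearchBot",
             "Google-Extended", "Applebot-Extended", "Bytespider"]

def blocked_agents(robots_txt: str, url: str) -> list[str]:
    """Return the agents in AI_AGENTS that this robots.txt disallows for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as loaded
    return [agent for agent in AI_AGENTS if not parser.can_fetch(agent, url)]

robots = """User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(blocked_agents(robots, "https://example.com/llms.txt"))
```

To audit a live site, fetch its robots.txt text first and pass it in. The parser's read() method can also load the file from a URL set with set_url().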

Step 8 - Test with a real agent. Open Claude Code or Cursor, paste the URL of your llms.txt, and ask the agent a question that should be answerable from the file ("What does Northwind charge?", "Which CRMs does Northwind integrate with?"). If the agent answers correctly without follow-up fetches, your descriptions are working. If it hallucinates or asks for the wrong page, your descriptions need more specificity.

Step 9 - Instrument your CDN. Set up bot-traffic filtering on Cloudflare, Fastly, or your CDN of choice to track hits to /llms.txt and /llms-full.txt by user agent. This is your single most useful measurement signal once the file is live.
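The measurement in Step 9 can be sketched in Python, assuming your CDN or origin can export logs in the common "combined" access-log format. Many CDNs use their own JSON schemas, so the regex is the part you would adapt. The code counts hits to /llms.txt and /llms-full.txt per AI user agent, using a case-insensitive substring match on the User-Agent string. User-Agent strings can be spoofed, so treat the counts as indicative.

```python
import re
from collections import Counter

# Combined log format: ip - - [time] "METHOD path HTTP/x" status size "referer" "user-agent"
LOG_RE = re.compile(r'"[A-Z]+ (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"')
TARGETS = {"/llms.txt", "/llms-full.txt"}
AI_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot", "OAI-SearchBot", "Bytespider"]

def count_llms_hits(log_lines) -> Counter:
    """Count (path, agent) pairs for requests to the llms files; unknown agents -> 'other'."""
    counts = Counter()
    for line in log_lines:
        m = LOG_RE.search(line)
        if not m:
            continue
        path = m["path"].split("?", 1)[0]  # ignore query strings
        if path not in TARGETS:
            continue
        ua = m["ua"].lower()
        agent = next((a for a in AI_AGENTS if a.lower() in ua), "other")
        counts[(path, agent)] += 1
    return counts

# Illustrative log lines (not real traffic).
logs = [
    '1.2.3.4 - - [01/May/2026:10:00:00 +0000] "GET /llms.txt HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.2)"',
    '5.6.7.8 - - [01/May/2026:10:01:00 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '9.9.9.9 - - [01/May/2026:10:02:00 +0000] "GET /llms-full.txt?v=2 HTTP/1.1" 200 9000 "-" "curl/8.0"',
]
print(count_llms_hits(logs))
```

The "other" bucket is worth watching. IDE agents and MCP tools often fetch with generic HTTP client user agents rather than a named crawler.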

Tools that generate llms.txt for you

If you don't want to hand-author it:

Mintlify, Fern, GitBook, Vercel Docs, Supabase Docs - generate llms.txt and llms-full.txt automatically for hosted documentation.

Yoast SEO, Rank Math - generate llms.txt for WordPress sites using sitemap data.

Website LLMs.txt plugin (30,000+ installs) - auto-generates the file and tracks GPTBot/ClaudeBot access for WordPress sites not using a major SEO plugin.

For brand sites that aren't on any of these platforms - most enterprise marketing sites, custom e-commerce builds, headless setups - hand-authoring is still the right approach. The file is small, the editorial judgment matters, and auto-generators tend to over-include.

Best practices and common mistakes

A short list of what separates a working llms.txt from a noisy one.

Keep it curated. 20–50 high-value links. Dumping the whole sitemap is the most common implementation failure.

Write descriptions for context, not SEO. "This explains our pricing tiers and what each includes" beats "Affordable enterprise SaaS pricing solutions." Agents read this to decide what to fetch next, not to rank you.

Don't create indexable Markdown duplicates of every page. Implementations that auto-generate .md copies of every site page can introduce duplicate content at scale, dilute crawl budget, and suppress rankings for the originals. Either keep the Markdown copies non-indexable (for example, with an X-Robots-Tag: noindex response header), or have each .md response declare the HTML version as canonical (for example, with an HTTP Link header using rel="canonical").

Update quarterly. Stale links to deleted pages signal an unmaintained site. Review the file at least once a quarter, and also whenever you publish major content or restructure the site.

Don't gate it. llms.txt at the root, served clean, no login wall, no rate-limiting that blocks the bots you want fetching it.

Don't include duplicate links. If the same URL belongs in two sections, pick one. Agents parse the first match.

Skip pages that require JavaScript to render. llms.txt is for canonical content surfaces. SPA-rendered pages that return empty HTML to a non-JS fetcher are a poor target - point agents at the prerendered or static version.

Monitor your CDN logs. This is the step most teams skip. After shipping, watch logs for hits to /llms.txt from known AI user agents. That data is more useful than any general study - it's your bots, your file, your baseline.
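Two of these checks, curation size and duplicate links, are mechanical enough to automate. Below is a minimal Python sketch. It assumes the 20–50 range from this list as the target, which is editorial guidance rather than a spec limit, and it counts only unindented link lines.

```python
import re
from collections import Counter

# Captures the URL from each "- [Title](URL)" line; MULTILINE makes ^ match every line start.
URL_RE = re.compile(r"^-\s*\[[^\]]+\]\(([^)\s]+)\)", re.MULTILINE)

def curation_report(llms_txt: str, low: int = 20, high: int = 50) -> dict:
    urls = URL_RE.findall(llms_txt)
    dupes = sorted(u for u, n in Counter(urls).items() if n > 1)
    return {
        "link_count": len(urls),
        "in_range": low <= len(urls) <= high,
        "duplicates": dupes,
    }
```

A non-empty "duplicates" list tells you exactly which URL to keep in one section and remove from the other.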

FAQ

Will major LLM providers ever support llms.txt as a ranking signal?

Unclear. Adoption could go either way. What's clear is that the cost of being wrong is asymmetric: shipping it costs half a day, while not shipping it on the day a major provider flips the switch costs you a head start on every brand that did.

Does Limy build llms.txt files for clients?

llms.txt is one surface inside Limy's broader Generative Engine Optimization framework. We build the file, audit robots.txt alongside it, identify the Provider Gap opportunities across your category, and instrument the CDN logs to measure what AI agents actually fetch. The file alone won't move citation share - the 7-pillar framework around it does - but in 2026 every serious GEO program ships one.

The bottom line

llms.txt in May 2026 is not a GEO ranking factor. Our analysis of 515 million LLM bot traffic events and every independent study confirm it. Brands shipping llms.txt hoping for a citation boost in ChatGPT or Perplexity will be disappointed, and any agency promising that lift is overselling.

llms.txt is B2A infrastructure. IDE agents, MCP servers, and the broader agentic-web stack fetch it constantly. That layer is growing fast, the cost of shipping is trivial, and the day a major answer engine flips the switch - if it ever does - you're already there.

Ship it. Keep it short. Audit robots.txt alongside it. Measure your own CDN logs instead of trusting general studies. And keep the strategic work - the on-site authority, the ecosystem signals across Reddit and review surfaces, the Wikipedia presence, the third-party citation channels - where the actual visibility gains live.

That's the honest GEO position in 2026, and it's the one we hold with every brand we work with.

Limy helps brands win visibility across AI search and the agentic web. We track citation share across ChatGPT, Perplexity, Claude, Gemini, and Google AI Mode, identify Provider Gap opportunities by category, and ship the B2A infrastructure - including llms.txt - that gets brands cited where it counts. Talk to us.
