Your attribution model is measuring the wrong channel. A growing share of your pipeline is being decided inside AI systems before a user ever clicks. Buyers are no longer navigating trackable journeys across ads or search results. They are asking AI systems what to buy, who to trust, and which product to choose, and making decisions based on those answers. By the time they reach your site, the decision is often already made.

82% of marketers don’t feel confident in their attribution data. That uncertainty now sits alongside rising acquisition costs, stricter privacy environments, and increasing pressure to prove ROI. Marketing attribution was built for a click-based internet, but that model no longer reflects how decisions are made. What you need now is a framework to measure influence within AI systems.

What Is Marketing Attribution?

Marketing attribution is the process of assigning value to the touchpoints that contribute to a conversion. Traditionally, this meant tracking interactions across channels (paid search, organic traffic, email, social) and distributing credit using models like last-click or multi-touch attribution.

These models assume you have visibility into every journey, but that assumption no longer holds. Attribution frameworks were built for an internet where user behavior is observable and every click, visit, and conversion leaves a measurable trail. In an agentic environment, that trail disappears, and signals like AI brand visibility go uncaptured because influence occurs within generated recommendations rather than in trackable interactions.

Why Traditional Attribution Models Break in an AI World

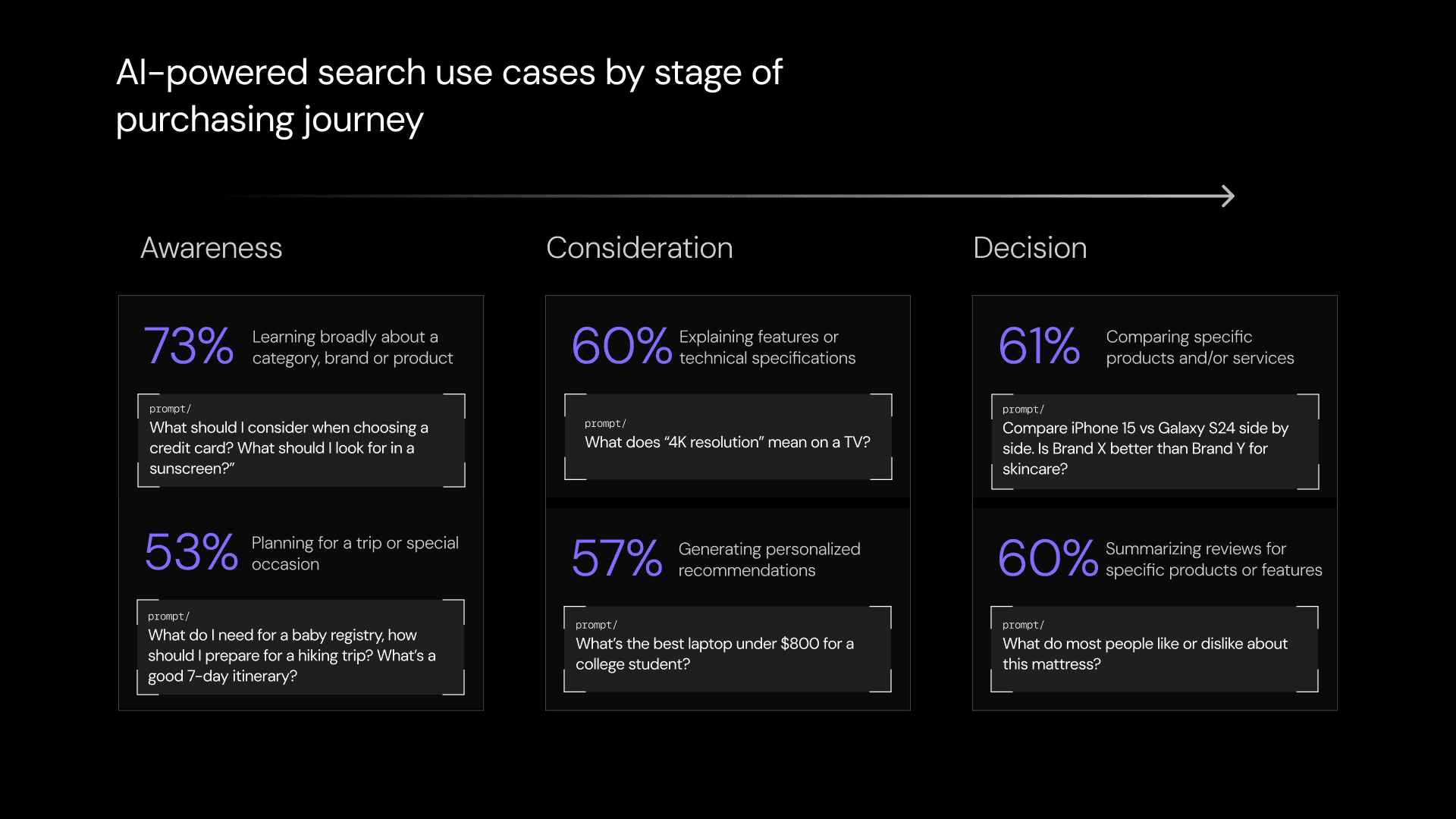

According to McKinsey, around 40-55% of consumers in top sectors (consumer electronics, grocery, travel, wellness, apparel, beauty, and financial services) use AI-based search to make purchasing decisions.

When users turn to systems like ChatGPT or Gemini for recommendations, a significant portion of the evaluation process occurs within the agent’s response itself. Instead of navigating multiple sources, comparing options, and gradually forming a decision, users are presented with a synthesized answer that effectively compresses the journey into a single interaction.

For example, a user asks ChatGPT, “Best CRM for SaaS startups.” They receive a shortlist of recommended tools, click one of the options, and sign up. In your analytics, this appears as a direct visit or branded search. In reality, the decision was made entirely inside the AI-generated response, before the user ever reached your site.

By the time a user arrives on a website, the majority of the decision-making process has already occurred. There is no browsing phase or observable evaluation process; effectively, the funnel collapses. Attribution systems, however, capture only the final visit, with little to no visibility into the earlier influences that shaped it.

Agent-generated recommendations are increasingly shaping decisions before any trackable interaction occurs. The result is a set of critical blind spots:

- No visibility into AI-generated recommendations
- No tracking of influence before the first click
- No connection between AI mentions and downstream conversions

You may already be driving decisions through AI-generated recommendations without seeing any of it in your attribution data, which means you’re allocating budget based on an incomplete view of what is actually influencing conversions.

Traditional vs AI-World Marketing Attribution

| Feature | Traditional Attribution | AI-World Attribution |
| --- | --- | --- |
| Primary Trigger | Click | Prompt |
| Attribution Unit | Session | Prompt / Recommendation |
| User Journey | Linear, multi-touch | Compressed, AI-mediated |
| Visibility | High (referrers, UTMs) | Low (“dark” influence) |
| Data Source | Clickstream data | Agent behavior + prompt data |
| Success Metric | CTR, CPC, conversions | Share of model, recommendation frequency, revenue impact |
| Optimization Lever | Keywords, channels | Recommendation inclusion, prompt visibility |

There is a shift from B2C to B2A (business-to-agent). Brands are no longer marketing only to humans. They are marketing to the agents acting on their behalf. This creates a new discipline, Agentic Marketing: marketing on channels where agents discover, evaluate, and recommend products, and increasingly act on behalf of buyers.

Traditional attribution models were built for human-visible journeys. Agentic Marketing happens inside systems that do not expose that journey. If you are not measuring inside the agent layer, you are not measuring the channel at all.

9 Marketing Attribution Recommendations for an AI World

1. Shift from Channel Attribution to Agent-Based Measurement

Traditional channel attribution no longer reflects how decisions are made. In an agentic environment, agents sit between the buyer and the brand, acting as a new layer in the funnel before any measurable interaction occurs. If your attribution model relies on clicks, sessions, or referrers, it misses this layer entirely.

A practical starting point is to identify signals that indicate agent-driven influence, even when the agent layer itself is not directly measurable. Direct traffic spikes without campaign activity, rises in branded search, unusually short paths to conversion, and landing page concentration around comparison or decision-stage content all point to earlier agent-driven influence.

Classify this behavior as agent-influenced demand and treat it as a proxy for activity happening inside the agent layer. This gives your team a more accurate view of how demand is being created and reduces the risk of under-valuing the channels and content shaping decisions before the click, particularly across different stages of the product development lifecycle, where influence is often established long before conversion.
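One way to start is a simple rule-based flag on session data. The sketch below is illustrative only: the `Session` fields, the path prefixes, and the page threshold are all assumptions, and should be tuned against your own analytics export rather than taken as a standard.

```python
from dataclasses import dataclass


@dataclass
class Session:
    channel: str        # e.g. "direct", "organic", "paid" (assumed labels)
    landing_path: str   # first page of the session
    pages_viewed: int   # pages seen before the session ended
    converted: bool


# Hypothetical values; adjust to your own site structure and data.
DECISION_PATH_PREFIXES = ("/compare", "/alternatives", "/pricing")
MAX_PAGES_FOR_SHORT_PATH = 3


def is_agent_influenced(session: Session) -> bool:
    """Heuristic proxy: a direct visit that lands on decision-stage
    content and converts after very few pages."""
    return (
        session.channel == "direct"
        and session.landing_path.startswith(DECISION_PATH_PREFIXES)
        and session.converted
        and session.pages_viewed <= MAX_PAGES_FOR_SHORT_PATH
    )


# Made-up sessions for illustration.
sessions = [
    Session("direct", "/compare/crm-tools", 2, True),
    Session("direct", "/blog/company-news", 6, False),
    Session("paid", "/pricing", 2, True),
]
flagged = [s for s in sessions if is_agent_influenced(s)]
print(f"{len(flagged)} of {len(sessions)} sessions look agent-influenced")
```

A flag like this is a proxy, not proof. It is most useful as a trend line: if the flagged share rises without matching campaign activity, that supports the case for agent-driven demand.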

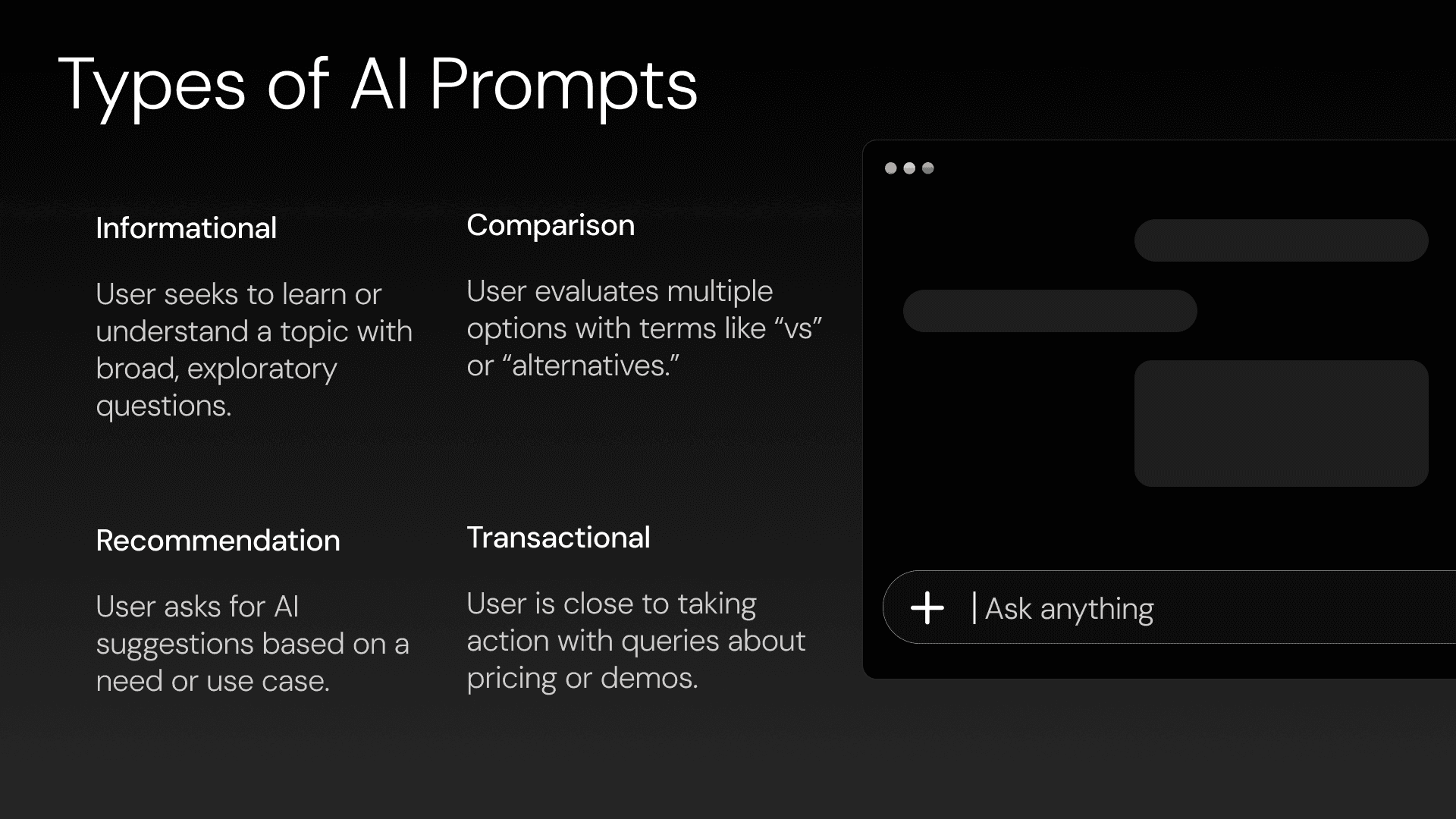

2. Start Capturing Prompt-Level Intent Data

Understanding which prompts surface your brand provides direct insight into user intent at the moment of decision-making. But this tracking cannot be done manually at scale. Prompt discovery, clustering, and tracking require continuous monitoring across models like ChatGPT, Gemini, and Perplexity, which is why most teams default to partial or inconsistent visibility.

Most tools attempt to solve this by tracking prompts or simulating LLM outputs. That approach is incomplete, as it captures what users ask, not what agents actually do. Choose a platform that operates at the agent layer, monitors prompt-level visibility across major AI systems, and groups that data into usable categories such as “best tools,” “alternatives,” “comparison,” or “recommendations.” The platform should then match those clusters to funnel stages so attribution models can distinguish between consideration-stage visibility and decision-stage influence. This data will give you a solid foundation for a scalable AI and LLM optimization strategy.
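To make the grouping step concrete, here is a minimal sketch of keyword-based prompt categorization with a funnel-stage mapping. The keyword rules, the stage mapping, and the sample prompts (including the brand names) are hypothetical; a production system would more likely use embeddings or a trained classifier.

```python
from collections import Counter

# Hypothetical keyword rules, checked in order; illustration only.
CATEGORY_KEYWORDS = {
    "comparison": (" vs ", "compare", "comparison"),
    "alternatives": ("alternative",),
    "best tools": (" best ", " top "),
    "recommendations": ("recommend",),
}

# Assumed mapping from prompt category to funnel stage.
FUNNEL_STAGE = {
    "best tools": "consideration",
    "recommendations": "consideration",
    "alternatives": "decision",
    "comparison": "decision",
}


def categorize(prompt: str) -> str:
    """Return the first category whose keywords appear in the prompt."""
    text = f" {prompt.lower()} "  # pad so whole-word keywords match at the edges
    for category, keywords in CATEGORY_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return category
    return "uncategorized"


# Made-up prompts for illustration.
prompts = [
    "Best CRM for SaaS startups",
    "Acme CRM vs Globex CRM for a small team",
    "Alternatives to Acme CRM",
    "How do I export contacts to CSV",
]
stage_counts = Counter(
    FUNNEL_STAGE.get(categorize(p), "unmapped") for p in prompts
)
print(stage_counts)
```

Counting prompts per stage is what lets an attribution model weight decision-stage visibility differently from consideration-stage visibility.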

3. Define AI Visibility as a Core Performance Metric

Treat AI visibility as a performance metric. If a brand is repeatedly recommended in high-intent AI queries, that is a sign of influence at a decisive stage in the journey. Conversely, if competitors dominate those prompts, that absence has clear commercial implications. To operationalize this, establish a small set of measurable KPIs, such as:

- How often the brand appears across monitored prompts
- The percentage of high-intent prompts where it is recommended relative to competitors
- How prominently it appears in the answer

Whether your brand is the top recommendation, one name on a shortlist, or a marginal mention makes a meaningful difference to downstream impact. Those visibility signals then need to be correlated with performance data. Analyze traffic changes, conversion rates, and pipeline growth alongside changes in AI visibility.
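The three KPIs above can be computed from a log of monitored prompts. This is a sketch under stated assumptions: the `PromptResult` structure, the brand names, and the use of list position as a stand-in for prominence are all illustrative choices, not an established standard.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    prompt: str
    high_intent: bool
    brands_in_order: list  # brands in order of appearance in the answer


def visibility_kpis(results, brand):
    """Appearance rate, high-intent share, and average position for a brand."""
    appearances = [r for r in results if brand in r.brands_in_order]
    high_intent = [r for r in results if r.high_intent]
    high_intent_hits = [r for r in high_intent if brand in r.brands_in_order]
    # Position 1 means the brand was named first in the answer.
    positions = [r.brands_in_order.index(brand) + 1 for r in appearances]
    return {
        "appearance_rate": len(appearances) / len(results) if results else 0.0,
        "high_intent_share": (
            len(high_intent_hits) / len(high_intent) if high_intent else 0.0
        ),
        "avg_position": sum(positions) / len(positions) if positions else None,
    }


# Made-up monitoring results for illustration.
results = [
    PromptResult("best crm for saas startups", True, ["Acme", "Globex", "Initech"]),
    PromptResult("crm alternatives for small teams", True, ["Globex", "Initech"]),
    PromptResult("what is a crm", False, ["Globex", "Acme"]),
    PromptResult("crm pricing comparison", True, ["Initech", "Acme"]),
]
kpis = visibility_kpis(results, "Acme")
print(kpis)
```

Tracked over time, these numbers become the series you correlate against traffic, conversion rate, and pipeline.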

4. Connect AI Recommendations to Downstream Revenue

If you can’t connect AI recommendations to revenue, you’re optimizing blindly. Build a multi-stage attribution structure that connects agent-driven discovery to measurable outcomes. In practice, this requires creating an AI-influenced segment using multiple signals rather than relying solely on referrers.

Teams also need to identify “direct-like” sessions that match AI-influenced patterns, track shared AI links where possible, and incorporate self-reported AI discovery into forms, surveys, and sales workflows. CRM records should then be enriched with these signals so that you can compare AI-influenced leads with non-AI leads on conversion rate, CAC, and time to convert, and better understand how AI-driven discovery reduces customer acquisition cost.
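Once leads carry an AI-influence flag, the comparison itself is straightforward. The sketch below assumes a hypothetical CRM export with `ai_influenced`, `converted`, `days_to_convert`, and `cost` fields; the records are made up purely to show the calculation and say nothing about real performance.

```python
from statistics import mean

# Made-up CRM export for illustration; field names are assumptions.
leads = [
    {"ai_influenced": True, "converted": True, "days_to_convert": 6, "cost": 120.0},
    {"ai_influenced": True, "converted": True, "days_to_convert": 10, "cost": 80.0},
    {"ai_influenced": True, "converted": False, "days_to_convert": None, "cost": 100.0},
    {"ai_influenced": False, "converted": True, "days_to_convert": 30, "cost": 200.0},
    {"ai_influenced": False, "converted": False, "days_to_convert": None, "cost": 150.0},
    {"ai_influenced": False, "converted": False, "days_to_convert": None, "cost": 250.0},
]


def cohort_summary(leads, ai_influenced):
    """Conversion rate, CAC, and time to convert for one cohort."""
    cohort = [lead for lead in leads if lead["ai_influenced"] == ai_influenced]
    won = [lead for lead in cohort if lead["converted"]]
    spend = sum(lead["cost"] for lead in cohort)
    return {
        "leads": len(cohort),
        "conversion_rate": len(won) / len(cohort) if cohort else 0.0,
        # CAC here is total cohort spend divided by customers won.
        "cac": spend / len(won) if won else None,
        "avg_days_to_convert": (
            mean([lead["days_to_convert"] for lead in won]) if won else None
        ),
    }


for label, flag in (("AI-influenced", True), ("Other", False)):
    print(label, cohort_summary(leads, flag))
```

With small cohorts, treat the differences as directional until enough leads accumulate to compare with confidence.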

Limy is the Agentic Marketing Stack. It operates at the infrastructure layer, capturing real agent behavior rather than inferred signals. While most tools track prompts or simulate AI responses, Limy sits inside the agent data flow, showing what agents actually access, interpret, and use to make recommendations.

By connecting prompt-level exposure, agent behavior, and on-site interactions to revenue, Limy allows teams to measure the channel that traditional analytics cannot see.

5. Instrument Your Stack for AI Attribution

Update your analytics and CRM setup so AI influence becomes measurable even when the referrer is missing. The goal is directional visibility: enough structured data to make AI-influenced demand visible in the systems teams already use to manage pipeline and performance. Start with relatively lightweight changes:

- Links under your control should follow clear UTM conventions.
- High-intent topics such as comparisons, alternatives, and “best of” terms should have dedicated landing paths where possible.
- Forms should include an “AI discovery” field with specific options, and those responses should flow into the CRM as lead-source enrichment.

You should also look beyond the analytics front end and examine server logs or bot access patterns to understand what AI agents are actually crawling and extracting. Server-side data shows whether AI systems are accessing important pages and whether the technical conditions for AI discovery are in place.
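As a rough illustration of the server-log check, the sketch below counts requests from AI crawlers per page. It assumes logs in the common "combined" access-log format, and the list of user-agent tokens is an assumption that should be verified against each vendor's current documentation. The sample lines use simplified, made-up user-agent strings.

```python
import re
from collections import Counter

# Assumed user-agent substrings for AI crawlers; verify before relying on them.
AI_AGENT_TOKENS = (
    "GPTBot", "ChatGPT-User", "OAI-SearchBot", "ClaudeBot", "PerplexityBot",
)

# Captures the request path and user agent from a combined-format log line.
LOG_PATTERN = re.compile(
    r'"(?:GET|POST|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def ai_hits_by_path(log_lines):
    """Count requests per (crawler token, path) pair."""
    hits = Counter()
    for line in log_lines:
        match = LOG_PATTERN.search(line)
        if not match:
            continue  # skip lines that are not in the expected format
        for token in AI_AGENT_TOKENS:
            if token in match.group("agent"):
                hits[(token, match.group("path"))] += 1
                break
    return hits


# Made-up log lines for illustration.
sample_log = [
    '203.0.113.7 - - [10/Mar/2025:10:00:00 +0000] "GET /compare/crm-tools HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.8 - - [10/Mar/2025:10:01:00 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '203.0.113.7 - - [10/Mar/2025:10:02:00 +0000] "GET /compare/crm-tools HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.4 - - [10/Mar/2025:10:03:00 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]
hits = ai_hits_by_path(sample_log)
for (agent, path), count in hits.most_common():
    print(agent, path, count)
```

If decision-stage pages never appear in this output, that is a sign agents may not be reaching the content you most want them to use.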

6. Segment AI Traffic as Its Own Channel

Separate agent-driven traffic into its own attribution channel rather than diluting it across the direct, organic, and referral buckets. As long as AI-influenced users are blended into legacy channel groupings, their behavior becomes difficult to analyze, and their commercial value remains easy to overlook.

Create a dedicated AI traffic segment in the analytics stack. Include identifiable traffic from generative engines where possible, as well as sessions that exhibit recognizable AI-influenced characteristics. Once that segment exists, your team can compare its engagement profile against other channels by looking at session depth, landing page patterns, time on site, conversion rate, and time to conversion.
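For the identifiable portion of that segment, a referrer-based rule is a reasonable first pass. In this sketch the hostname lists are assumptions rather than an official registry, and the function and channel names are hypothetical. It also checks `utm_source`, on the assumption that some assistants tag outbound links.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames; extend and verify for your own traffic.
AI_REFERRER_HOSTS = {
    "chatgpt.com", "chat.openai.com", "perplexity.ai",
    "gemini.google.com", "copilot.microsoft.com",
}
SEARCH_HOSTS = {"google.com", "bing.com", "duckduckgo.com"}


def classify_channel(referrer: str, utm_source: str = "") -> str:
    """Assign a session to 'ai', 'direct', 'organic', or 'referral'."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    # Check AI sources first so they are not absorbed by other buckets.
    if host in AI_REFERRER_HOSTS or utm_source.lower() in AI_REFERRER_HOSTS:
        return "ai"
    if not host:
        return "direct"
    if host in SEARCH_HOSTS:
        return "organic"
    return "referral"


print(classify_channel("https://chatgpt.com/"))
print(classify_channel("", utm_source="chatgpt.com"))
print(classify_channel("https://www.google.com/search?q=crm"))
```

Sessions with no referrer still fall into "direct" here, which is why this rule works best alongside behavioral signals rather than on its own.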

This analysis is important because AI-driven users often behave differently. In many cases, they arrive better informed and convert with fewer visits. If that pattern holds, attribution should reflect it, and budget planning should follow it.

This is also where most attribution systems fail. They are not connected to the agent layer, which means they cannot see how AI systems access or interpret content. Without visibility into agent behavior, attribution remains incomplete by design.

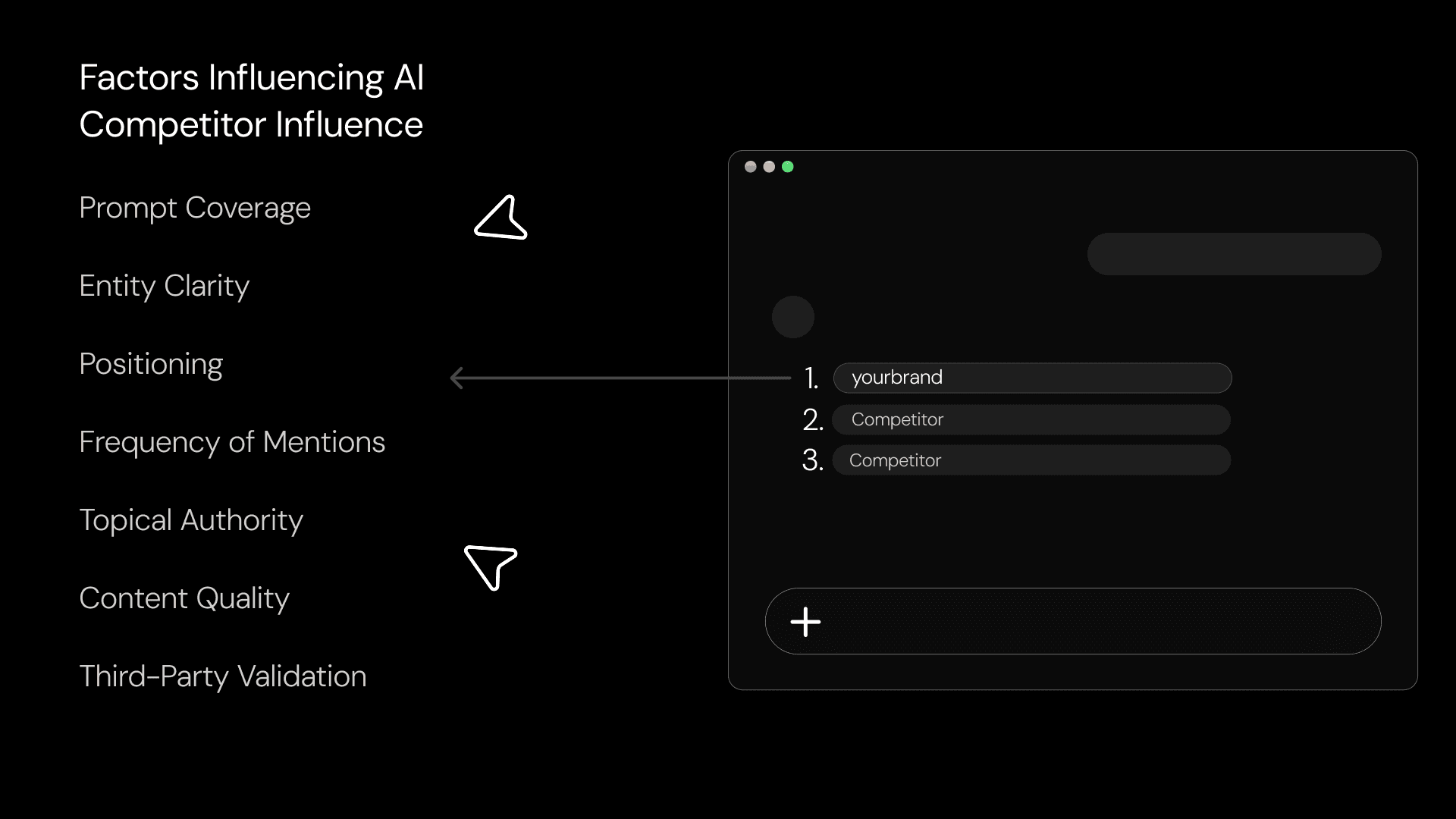

7. Account for Competitor Influence

Attribution should also account for where competitors are influencing decisions in your place. If competitors are consistently recommended in high-intent prompts, they are shaping decisions you never get to compete for.

A useful way to operationalize this is to introduce a “lost influence” metric. Teams should track competitor presence across high-intent prompts, assess how frequently competitors appear, and note whether they are positioned more prominently. They should also identify prompts where their own brand should reasonably appear but does not.
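A "lost influence" metric can be defined in several ways. The sketch below uses one simple, assumed definition: the share of high-intent prompts where at least one competitor is recommended and your brand is absent. The data structure and brand names are hypothetical.

```python
from collections import Counter


def lost_influence(results, brand):
    """Return the lost-influence rate, the gap prompts, and which
    competitors appear in those gaps."""
    high_intent = [r for r in results if r["high_intent"]]
    lost = [r for r in high_intent if r["brands"] and brand not in r["brands"]]
    rate = len(lost) / len(high_intent) if high_intent else 0.0
    gap_prompts = [r["prompt"] for r in lost]
    competitors = Counter(b for r in lost for b in r["brands"])
    return rate, gap_prompts, competitors


# Made-up monitoring results for illustration.
results = [
    {"prompt": "best crm for saas startups", "high_intent": True, "brands": ["Acme", "Globex"]},
    {"prompt": "crm alternatives for small teams", "high_intent": True, "brands": ["Globex", "Initech"]},
    {"prompt": "crm pricing comparison", "high_intent": True, "brands": ["Initech", "Acme"]},
    {"prompt": "what is a crm", "high_intent": False, "brands": ["Globex"]},
]
rate, gap_prompts, competitors = lost_influence(results, "Acme")
print(f"Lost influence: {rate:.0%}")
print("Gap prompts:", gap_prompts)
print("Competitors in gaps:", dict(competitors))
```

The list of gap prompts is often more actionable than the rate itself, since it points to the specific questions where the brand should appear but does not.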

Feed this into pipeline analysis, win/loss reporting, and attribution modeling. If competitors consistently show up in the prompts that matter most at the decision stage and your brand does not, that absence is an attribution problem. In an agentic marketing environment, content is no longer written only for users or search engines, but for the systems that evaluate and recommend on their behalf.

8. Optimize Content for AI Extraction

Rework content to match how AI systems extract, interpret, and reuse information. Even strong content can underperform in AI-driven discovery if it is structured for traditional browsing rather than clear machine-readable extraction. If a page does not provide direct answers or is not obviously relevant to the question, it is less likely to be cited or recommended.

Restructure important pages around the kinds of questions AI systems are likely to answer. Content should provide clear responses, use structured comparison formats when relevant, and reinforce the relationship among the brand, the product, and the category.

Limy’s recommendations engine translates LLM visibility data into a clear execution plan, identifying citation gaps and high-impact topics where the brand should appear but does not. It then generates actionable content recommendations and enables teams to execute against them directly.

This goes beyond content structure or on-page optimization. Limy surfaces the specific actions needed to influence agent recommendations, whether that is creating new content or expanding presence across external channels such as PR, social, and third-party sources that agents rely on when evaluating brands. The platform not only highlights what is missing but also enables teams to act on it directly, generating and executing against these opportunities while tracking how each change impacts visibility, recommendation frequency, and downstream revenue.

9. Continuously Recalibrate Attribution Models

Treat AI attribution as a dynamic system. AI outputs change, interfaces evolve, shopping and assistant surfaces expand, and user behavior shifts with them. A model that feels sufficient today can quickly become outdated if it is not reviewed and adjusted.

Practically, this means moving to a regular recalibration cycle. Test prompts continuously, and review visibility patterns over time to identify shifts in recommendations and competitive positioning. Revisit attribution assumptions quarterly, or more frequently if the category is moving quickly.
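A recalibration cycle can begin with something as simple as comparing two visibility snapshots. In this sketch, each snapshot maps a prompt cluster to the brand's recommendation rate; the 10-point threshold, the cluster names, and the numbers are all made up for illustration.

```python
# Hypothetical threshold: flag clusters whose rate moved by more than 10 points.
SHIFT_THRESHOLD = 0.10


def flag_shifts(previous, current, threshold=SHIFT_THRESHOLD):
    """Return clusters whose recommendation rate changed beyond the threshold."""
    shifts = {}
    for cluster in previous.keys() | current.keys():
        # A cluster missing from one snapshot is treated as a rate of 0.
        delta = current.get(cluster, 0.0) - previous.get(cluster, 0.0)
        if abs(delta) > threshold:
            shifts[cluster] = round(delta, 4)
    return shifts


# Made-up snapshots for illustration.
previous = {"best tools": 0.40, "alternatives": 0.25, "comparison": 0.30}
current = {"best tools": 0.42, "alternatives": 0.10, "comparison": 0.45}

shifts = flag_shifts(previous, current)
for cluster, delta in sorted(shifts.items()):
    print(f"{cluster}: {delta:+.0%}")
```

Flagged clusters are the ones worth reviewing first when you revisit attribution assumptions.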

Your team should also stay alert to new AI surfaces and behavioral changes. Attribution in an AI world is about building a measurement approach that can accommodate future changes in assistants, commerce experiences, and recommendation formats without reverting to a click-only view of performance.

The Channel You Can’t See Is Already Driving Revenue

A growing share of influence now happens before a user ever clicks, visits, or converts. That creates a structural gap between how performance is measured and how demand is generated. Most teams are optimizing based on incomplete data, missing the channel that is already shaping buying decisions. If you cannot measure AI influence, you are already underreporting your pipeline.

Limy is the only agentic marketing stack that turns AI search into a revenue channel. It tracks how your brand appears across AI systems, connects prompt-level exposure to traffic and conversions, and attributes every interaction to revenue. From real-agent behavior to strategy and execution, it closes the loop that traditional analytics cannot.

Book a demo and see how you can close your AI attribution gaps.
